The Evolution of Template Matching: From Pixel-Based to Deep Learning

How do you find the same object in two images? The simplest approach is to compare pixels directly. However, this fails when lighting changes or camera angles differ.

This article explains how template matching technology has evolved from pixel-based to hand-crafted features to deep learning.

Evolution Summary

| Stage | Representative Technology | Characteristics | Limitations |

|---|---|---|---|

| Stage 1 | SSD, SAD, NCC | Direct pixel comparison | Vulnerable to scale/rotation/lighting changes |

| Stage 2 | SIFT, ORB | Hand-crafted keypoints | Vulnerable to textureless regions, repetitive patterns |

| Stage 3 | SuperPoint | Deep learning keypoint detection | Matching still uses traditional methods |

| Stage 4 | SuperGlue | Deep learning keypoint matching | Requires GPU |

Stage 1: Pixel-Based Template Matching

Slide the template image across the target image and calculate similarity.

Similarity Measurement Methods

| Method | Formula | Characteristics |

|---|---|---|

| SSD (Sum of Squared Differences) | Lower is more similar | |

| SAD (Sum of Absolute Differences) | Faster than SSD | |

| NCC (Normalized Cross-Correlation) | Normalized correlation coefficient | Robust to brightness changes |

OpenCV Implementation: cv2.matchTemplate()

Limitations of Pixel-Based Methods

- Scale Changes: Fails when template and target sizes differ

- Rotation Changes: Cannot recognize rotated objects

- Lighting Changes: Similarity degrades with brightness differences

- Occlusion: Fails when parts are hidden

Stage 2: Hand-Crafted Features

Instead of pixels, extract and compare keypoints.

SIFT (2004)

Scale-Invariant Feature Transform

- Scale Space Construction: DoG (Difference of Gaussian) pyramid

- Keypoint Detection: Local maxima/minima points

- Descriptor Generation: Gradient histogram-based 128-dimensional vector

Achievement: Scale invariance, rotation invariance

Speed Improvement Attempts After SIFT

SIFT was accurate but slow. Several attempts were made to improve this:

| Algorithm | Year | Key Idea | Limitations |

|---|---|---|---|

| SURF | 2006 | Hessian-based detection + Integral Image, several times faster than SIFT | Patent issues |

| BRIEF | 2010 | Introduced binary descriptors, generates descriptors from pixel pair comparisons only | No rotation invariance |

| BRISK | 2011 | Extended BRIEF to be scale invariant | Still speed limited |

These attempts were synthesized into ORB.

ORB (2011)

Oriented FAST and Rotated BRIEF

| Component | Description |

|---|---|

| FAST | Corner detector + pyramid for scale handling |

| rBRIEF | Rotation-invariant binary descriptor |

| Matching | Hamming distance (XOR operation) |

Advantages:

- Tens of times faster than SIFT/SURF

- No patents

- De facto standard for real-time SLAM/AR

Limitations of Hand-Crafted Features

- Textureless Regions: Keypoint detection fails

- Repetitive Patterns: Many similar keypoints lead to mismatches

- Extreme Lighting Changes: Descriptor deformation

Stage 3: Deep Learning Keypoint Detection - SuperPoint (2018)

A self-supervised learning based keypoint detector developed by MagicLeap.

Training Method

Problem: How to obtain ground truth labels for keypoints?

Solution: Two-stage training

| Stage | Name | Method |

|---|---|---|

| Stage 1 | MagicPoint | Train on synthetic images (lines, triangles, rectangles) with corners/intersections as GT |

| Stage 2 | Homographic Adaptation | Apply multiple homography transformations to real images and accumulate detected keypoints |

Network Output

A single CNN outputs two things simultaneously:

- Keypoint Probability Map: Probability of each pixel being a keypoint

- Dense Descriptor Map: 256-dimensional descriptors

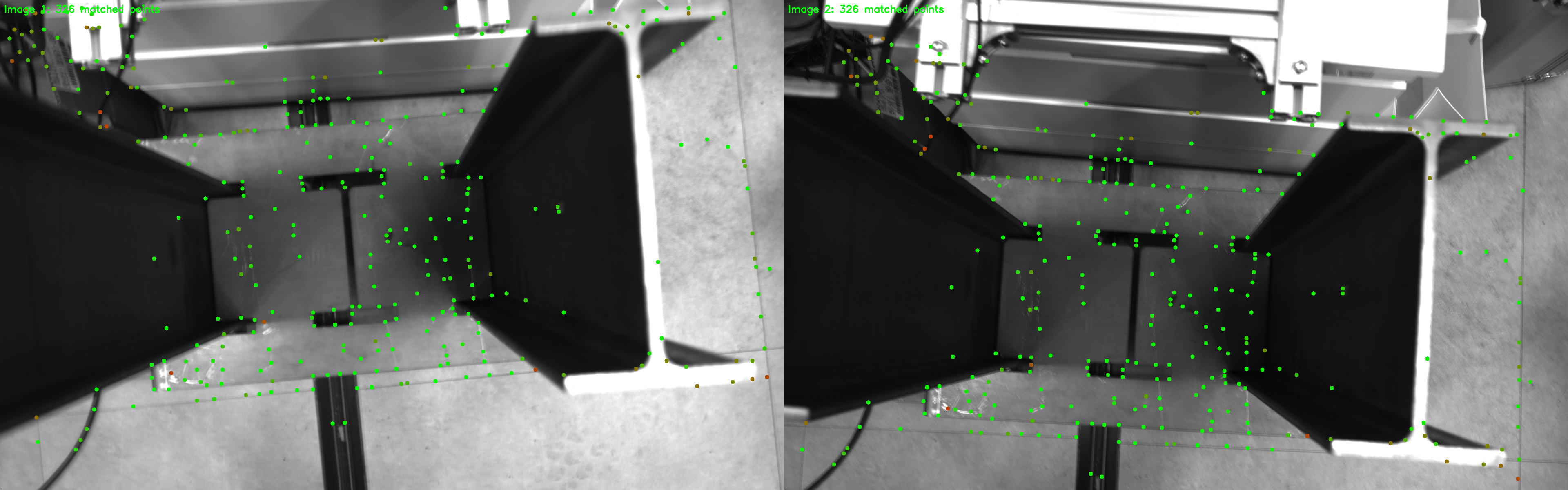

SuperPoint Keypoint Detection Results

Keypoints detected by SuperPoint in two images (green dots). Concentrated in texture-rich areas

Observations:

- Keypoints concentrated on edges and corners of metal structures

- Textureless areas (walls, floors) automatically avoided

- Only meaningful keypoints selectively detected

Stage 4: Deep Learning Keypoint Matching - SuperGlue (2020)

A Graph Neural Network based matching network developed by MagicLeap.

Problems with Traditional Matching

Traditional matching pipeline:

Nearest Neighbor -> Ratio Test -> RANSAC (outlier removal)

Limitations:

- Many mismatches in repetitive patterns

- Depends on RANSAC for post-processing

- Does not use context information

SuperGlue's Approach

Define matching as a graph problem:

- Keypoint Encoding: Position + visual descriptor

- Self-Attention: Learn relationships within the same image

- Cross-Attention: Learn relationships between two images

- L iterations: Repeat Attentional GNN layers

Optimal Transport (Sinkhorn)

Apply Optimal Transport algorithm for final matching:

- Each keypoint matches with at most one counterpart

- Dustbin Concept: Explicit handling of unmatchable points (occlusion, single view)

SuperGlue Matching Results

Keypoint pairs matched by SuperGlue (326 matches from 455, 542 keypoints). Color indicates matching confidence

Observations:

- Geometric Consistency: Almost all matching lines show consistent direction

- No Crossing Lines: Extremely few outliers

- Dustbin Working: Not all keypoints are matched (handling occluded regions)

Method Comparison

Speed vs Accuracy

Speed: ORB >>>>>> SIFT > SuperPoint > SuperPoint+SuperGlue

Accuracy: ORB < SIFT < SuperPoint < SuperPoint+SuperGlue

Use-Case Recommendations

| Use Case | Recommended Method | Reason |

|---|---|---|

| Real-time (30fps+) | ORB | Speed priority, no GPU needed |

| Mobile/Edge | ORB or lightweight SuperPoint | Resource constraints |

| Maximum Accuracy | SuperPoint + SuperGlue | Deep learning precision |

| Extreme Lighting Changes | SuperPoint + SuperGlue | Learning-based robustness |

| Repetitive Patterns | SuperGlue required | Context-based distinction |

| Rapid Prototyping | SIFT/ORB | Built into OpenCV, easy setup |

Application Areas

| Field | Related Technology |

|---|---|

| Visual SLAM | Camera pose estimation |

| AR Tracking | Virtual object alignment |

| 3D Reconstruction | Structure recovery from multi-view images |

| Image Stitching | Panorama generation |

| Robot Navigation | Environment recognition |

| Industrial Inspection | Defect detection |

Key Takeaways

-

Pixel-based matching is vulnerable to scale, rotation, and lighting changes. Only usable in simple environments.

-

SIFT achieved scale/rotation invariance but is computationally slow. ORB is tens of times faster and patent-free, making it the standard for real-time SLAM.

-

SuperPoint solves the labeling problem through self-supervised learning and detects only meaningful keypoints.

-

SuperGlue performs context-based matching using GNN + Optimal Transport. It has few outliers without RANSAC, and explicitly handles unmatchable points with Dustbin.

-

Selection Criteria:

- Need real-time -> ORB

- Need maximum accuracy -> SuperPoint + SuperGlue

- Repetitive pattern environment -> SuperGlue required

Template matching is a versatile technology that "works everywhere." Choose the method that fits your problem requirements.